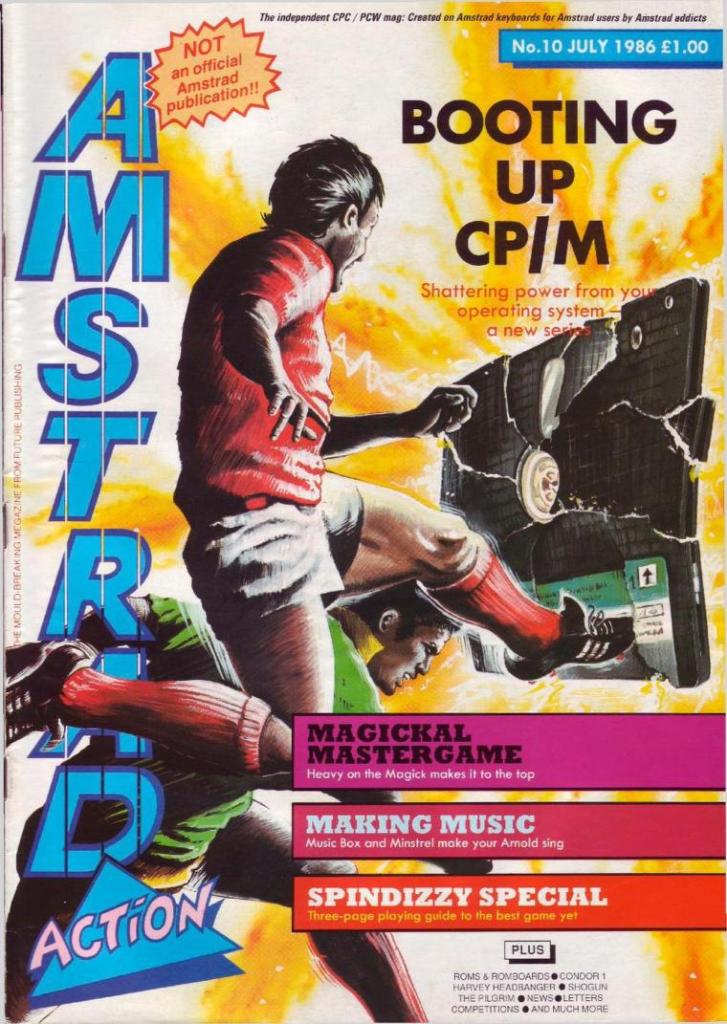

Future Publishing: The Somerton Years

Matt Nicholson remembers the early days of Amstrad Action and the birth of PC PLUS

First published in Issue 8, the Christmas Special 2022 edition of Pixel Addict magazine

It was early 1986 and I’d been working at VNU Publishing in the centre of London for a few years as editor of What Micro, which at the time was the industry’s only ‘buyers guide’ magazine. The microcomputer industry had been growing at some 30 per cent a year and money was bubbling up everywhere. This was the world of corporate publishing with lavish expense accounts and even more lavish lunches. It was amazing how many problems could only be sorted out over a bottle of half-decent wine and a slap-up meal in a top-notch Soho restaurant. There were stories of teenage programmers buying Ferraris which had to be stashed in the garage until they were old enough to drive them, and everyone had an angle.

But as 1985 turned into 1986 the industry slowed to a more normal growth rate, and VNU was tightening its belt. A lot of the early excitement had gone and I was getting bored. It was also becoming obvious that the money was in publishing, not writing, and I was beginning to think about setting up my own company.

Chris Anderson had joined VNU as editor of Personal Computer Games (PCG) around the same time as myself. For a while we were the ‘new editors on the block’ and on nodding terms, but he then left and rumour had it he had set up his own company somewhere deep in the West Country. Like many restless journalists of the time, I browsed the job ads in the Media edition of The Guardian every Thursday, and I couldn’t help but notice the postage-stamp sized advert for an editor for Amstrad Action when it appeared.

I couldn’t imagine my wife would be interested in abandoning our new flat in West London to bring up our new daughter in some two-bit town in the middle of Somerset, but I had a conversation with Mike Carroll, who sold advertising for Chris’s company from London on a commission basis, and he persuaded me that Chris was up to something interesting. I decided to pay him a visit.

Read more…

How secure is the cloud?

This is my editorial for the Spring 2017 issue of HardCopy magazine:

Image by HypnoArt via Pixabay

Security and privacy are two aspects of an issue that has long bugged our society. On the one hand most of us consider that we have a right to a private life, and indeed Article 12 of the Universal Declaration of Human Rights explicitly states that “No-one shall be subjected to arbitrary interference with his privacy, family, home or correspondence, nor to attacks upon his honor and reputation.” The right is implicit in the American Constitution, while Article 8 of the European Convention on Human Rights is more pragmatic in that, though explicitly stated, the right is moderated by the needs of a “democratic society” with regards to national security and crime prevention.

Perhaps unsurprisingly, the situation in the UK is less clear, particularly since the fiasco that is the Investigatory Powers Act 2016 (aka the ‘Snooper’s Charter’). Read more…

Out of our hands

This is my editorial for the Winter 2016 issue of HardCopy magazine:

In February 2009, in an effort to demonstrate exactly how far we are willing to obey a seductive voice emanating from a plastic box, the driver of a 50-foot articulated lorry wedged his vehicle so thoroughly into a hair-pin bend in the tiny Cotswold village of Syde that it took five days to extricate. In the light of this and other such examples, I am heartened by the news that Google, Amazon, Facebook, Microsoft and IBM have announced the Partnership on Artificial Intelligence to Benefit People and Society in order to look into the ethical and societal implications of such technologies.

Many of these implications stem from the lack of human involvement in the decisions that such technologies are increasingly making. Driverless cars are almost upon us, and by most accounts orders of magnitude safer than human drivers, particularly in cities where they can communicate with each other to better understand the dangers ahead. However, on 7 May this year a Model S Tesla in driverless mode smashed at high speed into an 18-wheel truck and trailer, killing the ‘driver’ instantly. It looks as though the car was unable to distinguish the white truck and trailer against the bright Florida sky behind, something that most human drivers would be able to do ‘without thinking’. It is this that makes such accidents seem ‘inhuman’. Read more…

This is my editorial for the Summer 2016 issue of HardCopy magazine:

The Microsoft Apps on Android.

In March 1995, some five years after Tim Berners-Lee created the first World Wide Web server, Microsoft announced a new “design environment for online applications” codenamed ‘Blackbird’ which would allow developers to create content for the forthcoming Microsoft Network. MSN was promised to be “more sophisticated” than anything the nascent World Wide Web could offer, and accessible directly from the desktop of Windows 95, which was launched that August. However, in the intervening months, Bill Gates had a change of heart, taking Microsoft through what BusinessWeek described as “a massive about-face.” By the time Windows 95 launched, ‘Blackbird’ had become Internet Studio, MSN was just another website, and Windows NT was showing off its new Internet Information Server (IIS). Read more…

Unnecessary developments

This is my editorial for the Spring 2016 issue of HardCopy magazine:

I recently attended a software conference. You know the sort of thing: a couple of keynotes in the morning, followed by breakout sessions running in parallel across a bunch of rooms through the afternoon. Normally I don’t have trouble with such things: I go onto the website, look at the schedule, perhaps print out a few pages, and my day is organised. However, this time it was different. This time I was presented with a singularly uninformative website which exhorted me to download and install the conference scheduling app, which appeared only to be available for smartphones. As I was concerned that I might have to book the breakout sessions I wanted to attend – there being nothing on the website to imply otherwise – I duly downloaded and installed it. Read more…

Selling your identity

This is my editorial for the Winter 2015 issue of HardCopy magazine:

One place that’s not interested in your personal identity (photo by WiNG)

There are some things we’re happy to pay for, and some things we’re not, and in the digital world, there’s little rhyme or reason between the two. I am quite happy to pay the BBC nearly £150 a year for the privilege of watching a handful of TV channels without being interrupted by inane advertising, and up until just a few years ago, there were enough people prepared to pay for mobile phone ringtones to create a billion dollar industry. And yet we still seem unwilling to pay anyone for accessing their website, preferring instead to enter into an ambiguous and often downright dangerous relationship with a largely unknown collection of marketing companies.

Walk into a cinema, buy a ticket with cash, and you can watch a film without the cinema having any idea who you are. As long as you’ve got a valid ticket, they’re happy. Even if you pay by credit card, the cinema would have to take deliberate and indeed illegal steps to intercept the data transferred between you and the credit card company. Buy a ticket through the same cinema’s website, though, and the chances are that you will be asked, at the very least, for your email address, so providing them with a unique key that can be linked to any other personal data held by any other website that has your email address. Read more…

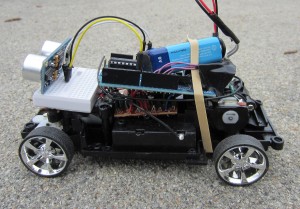

The Internet of Things

This is my editorial for the Summer 2015 issue of HardCopy magazine:

It seems inevitable that the next bandwagon coming our way has ‘Internet of Things’ written on it in very large letters, just as the previous one proclaimed ‘The Cloud’ as the universal solution, and the one before insisted that ‘Services’ were the answer to everything. Once again, we can expect every new product and service to boast an association with the new buzzword, even if it’s just a humble cable-tie.

Which is, of course, not to say that we shouldn’t take the Internet of Things (IoT) seriously. After all, considering our servers to be deliverers of services, and then delivering them through the Internet, did open up new possibilities not only in terms of technology but also in transformative business models. Regardless of the hype, IoT does represent a major paradigm shift, in that it invites a new way of thinking about the Internet, and opens up whole new worlds of both possibilities and considerations. Read more…

Intel and the Internet of Things

Matt Nicholson caught up with Intel’s James Reinders at the iStep 2015 conference, held mid-April in Seville.

Matt: We’re hearing a lot about the Internet of Things (IoT) these days. What do you consider to be its distinguishing features?

James: First of all it’s an explosion of innovation in the devices that one can build, but what fuels it is the enormous computing power that anything can access because of its connection to the Internet. So the ‘things’ that make up the IoT don’t need to be massive computers. Instead they can be the most itty-bitty little things, and it’s their connection to other computers that gives them the power. So it’s a logical extension of where we’ve been going for a long time, but suddenly a lot of things are clicking in place. Intel gets to help through our processor technology and design capabilities which allow us to build things like our Quark processor line: very small but extraordinarily powerful compute devices that can be embedded in things that are as small as buttons. Read more…

The most effective way you can enhance the performance of your PC is to replace its existing hard disk with a Solid State Drive (SSD). Many of the articles covering this subject make the process sound fairly daunting; however, the more recent devices come with utilities that make it much easier. With 250GB SSDs now available for less than £100, it is well worth doing.

You can choose to reinstall Windows and all your applications on to your new SSD from scratch, but for most it makes more sense to ‘clone’ the contents of your existing hard disk, which has the advantage of maintaining your existing working environment. The process does take time but, provided your machine is not more than five or six years old, need not be as daunting as much of the available advice suggests. The following relates to the installation of a Samsung 850 EVO 2.5-inch 250GB SSD into a Dell Vostro 430 running Windows 7. It also assumes that the connection to your existing drive is either SATA II or SATA III (if in doubt, check the specification or open up the case and check the connections). Read more…

We know what’s good for you

This is my editorial for the Spring 2015 issue of HardCopy magazine:

Recently I powered up my Windows Phone to discover that it had downloaded and installed a new app – not something I’d chosen for myself, you understand, but something that Microsoft obviously thought I needed. I am talking about Cortana, which (I assume) has appeared on countless other Windows Phones as well.

For those not blessed with a Windows Phone, Cortana is Microsoft’s answer to Apple’s Siri, Google Now or Amazon Echo in that it’s an Intelligent Personal Assistant, designed to feed you information tailored to your personal needs and desires. Such information can range from a reminder that you’ve got an appointment with your boss, to a notification that your favourite band is playing in Barcelona at the same time as you’re planning a short break, and a link to the ticket office, the airline, and a bijou hotel close to the venue that it thinks you might like. Read more…